Hundreds of free tutorials

Learn Godot with the largest base of free learning resources. We have everything you need to get started.

Godot Tours: 101 - The Godot Editor

Godot Tours lets you learn interactively, step by step, directly inside the Godot editor. In this first, completely free tour, we take you on a quick guided walk through the user interface to help you find your way around the editor and break the ice with Godot.

Learn GDScript From Zero

Learn to code from zero with Godot’s GDScript programming language. A free and open-source, 10-hour interactive course!

Free and Open-Source tools

We made over 100 open-source Godot demos and tools to help you learn to make games faster.

Godot Procedural Generation

A growing collection of procedural content generation (PCG) algorithms in Godot.

Godot Shaders

Open-source 2D and 3D shaders for the Godot game engine, all with complete demos.

Latest news

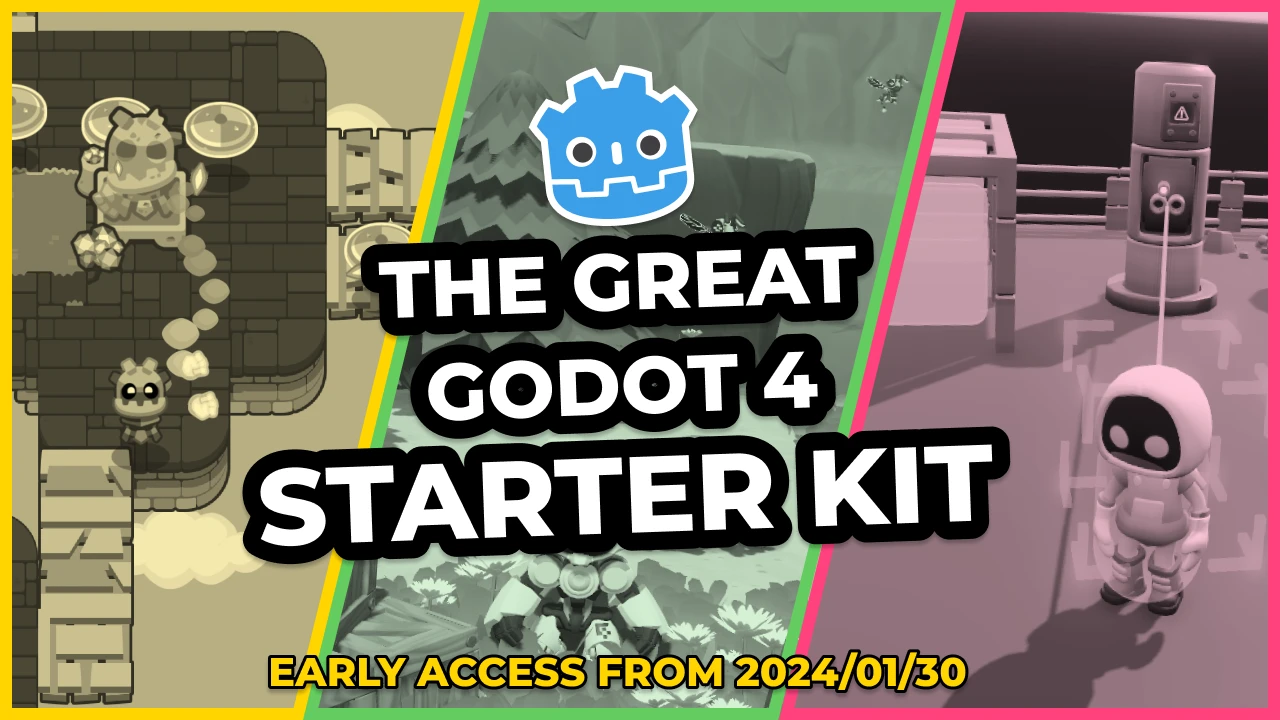

Godot 4 courses are releasing in Early Access starting Jan 30!

Lots of good news to unwrap this time around! The Godot community is in for an exciting winter.

We're Discontinuing the Golden Ticket to ALL Godot Courses

We’re discontinuing the Ultimate Bundle on July 15, at midnight UTC. See all the details in this post.

Ready to get started?

Take your game creation skills to the next level for free, with Godot.

Get started for free